I gave an Ignite talk at a mapping conference in New Orleans in 2014 about using machine vision techniques to automate traffic signage inventories. In those days, we were just starting to hear about the new generation of artificial intelligence (AI) so widely discussed today.

There are many words used to describe AI, usually based on the specific algorithmic approach that is implemented – such as deep learning, machine learning or neural networks. I’m not a data scientist, but I’ll talk about my real-world experiences then and now.

Back in 2014, I was working on a project with the District Department of Transportation (DDOT) in Washington DC, which completed a detailed street-level imagery survey of every street in the city. This included all the back alleys historically used for trash removal and deliveries. So, we had lots and lots of panoramic street imagery, similar to Google’s Street View. Having lots of data is key for starting any machine learning exercise.

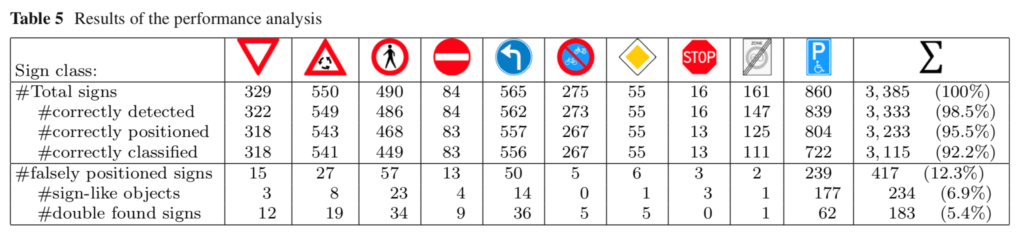

We also had several academic researchers on loan from a nearby university to test the limits of their knowledge with this data. That’s when I got the idea to approach DDOT about an automated signage detection pilot project. The results of this pilot were encouraging and similar to tests on street imagery in Europe shown below (Table 5). My colleagues published their results here.

Fast forward four years, and I am now leading product strategy for the HxGN Content Program, focusing on several types of geospatial data. Aerial imagery is the main one, because we’ve got petabytes of it stored online, collected over the last five years. It’s more than any human can look at, so machine learning becomes necessary and very useful for base map creation (roads, trees, buildings, etc.). We’re making it widely available for analysis, and many companies have started to train their deep learning solutions with this data, including for high-definition maps for autonomous navigation and 3D city models for government.

What have I learned about deep learning through the years?

● You never get 100% detection

● You need lots of training data and lots of people training it

● You need sharp data scientists to tweak the algorithms

● You need management or clients that understand the limitations

● You need people to finish the job

So, I titled my talk back in 2014 “The Machines are Learning but We’re Still Smarter”, and it’s true even today. We’ve made lots of progress, for sure, but there’s a lot more work to do to train our algorithms to the point where they can train themselves and truly get smarter.

I encourage you to take a look at the HxGN Content Program’s coverage and datasets and use your smarts to extract intelligence from one of the largest online aerial imagery libraries.